AI Can Design Everything Except What Matters

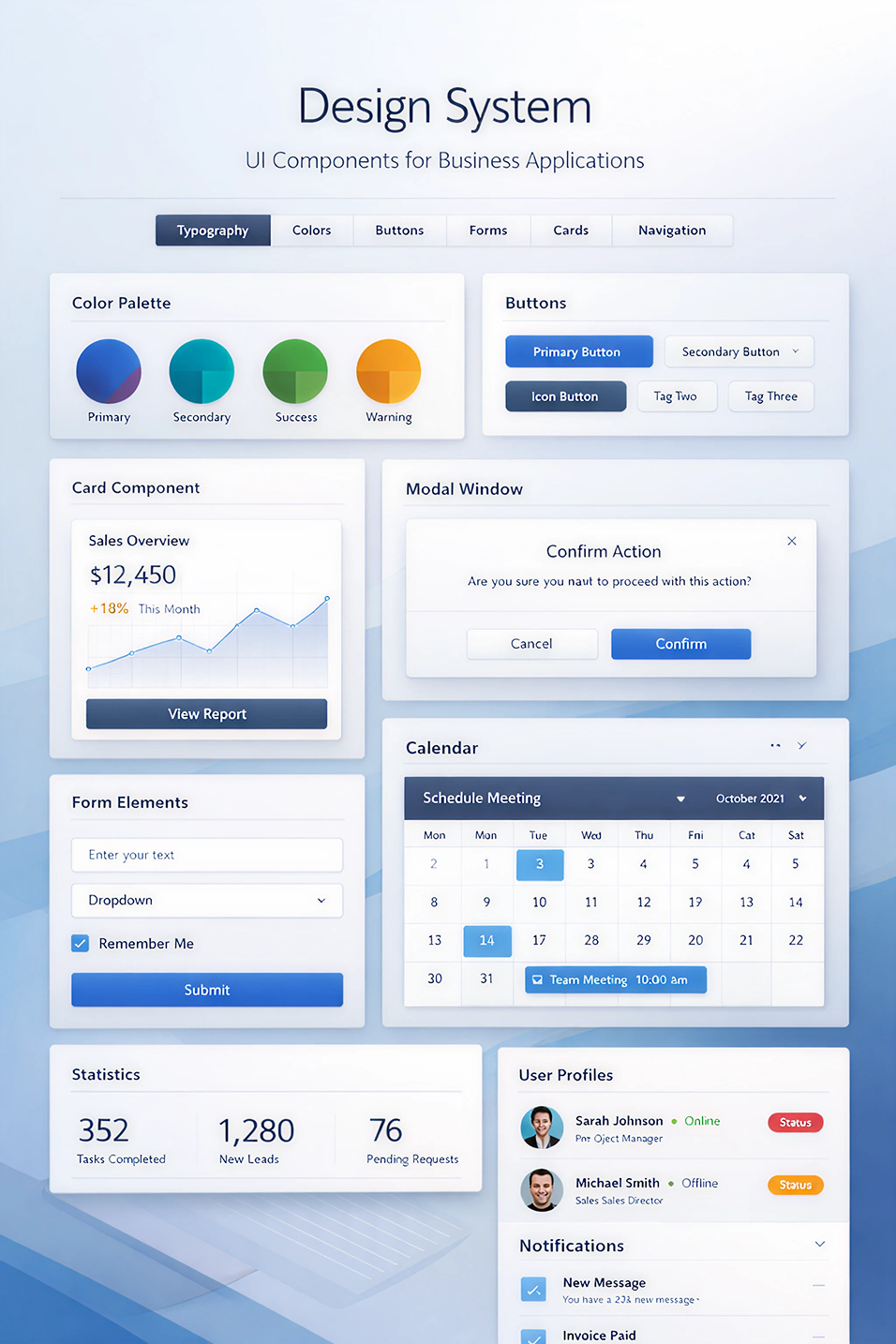

I created this design system image in just a couple of minutes using ChatGPT. It's debatable whether it's really that good, but that's not my point. The point is it was created quickly and is probably good enough for many applications.

Should I Be Worried?

A designer friend of mine recently asked me, "Should I be worried?"

I'm a product leader and designer on our AI team at Vivint, and I get to watch AI tools get built and get better every week. I also watch designers do things every day that AI can't touch. Both things are true at the same time, and the tension between them is worth being honest about.

The Uncomfortable Truth

Here's the thing. AI can design now. Not "sort of design" but actually design.

One YouTube designer I saw built a full brand identity in less than two hours using only ChatGPT. Logo, mood board, packaging, website, all of it. Work that used to take days or weeks, done in a matter of hours.

We all know this story by now, and the job market is reflecting the shift. I just looked this up. The Bureau of Labor Statistics projects only 3% growth for graphic design over the next decade. One estimate puts it bluntly: AI will be good enough for roughly 75% of companies. The remaining 25% will still need human designers, but that's a much smaller profession than the one most of us entered.

If you're a designer reading this, you don't need me to tell you the ground is shifting. You can feel it.

The Build Trap

There's a lot of advice out there peddling AI education. Learn AI, blah blah blah. I'm not saying there's no value in learning tools that make you more efficient. Frankly, the logic sounds airtight: if AI is the future, become the person who's best at using it.

I think learning AI is necessary and becoming table stakes. But it's not a strategy that will differentiate you.

Here's the problem. If you sell yourself as fast, you're competing on the one thing AI does better than any human: being fast. Basically, you're running harder on a track that keeps getting shorter.

The tech industry is living this right now. Dozens of companies have raised hundreds of millions of dollars building AI app builders. Most of them are thin wrappers on top of the same models. Their moat is about as deep as the time it takes to copy a UI, which is roughly a week. The ones that survive will own something the models can't give them.

The same goes for careers. Fluency with AI tools is table stakes, not a differentiator. The real question is this. What do you own that still matters when AI gets ten times better?

What I Learned Building Game Loop

I've been building a side project for the past month. It's a self-improving game design platform where AI agents generate games, playtest them with synthetic users, and evaluate the results. The dream was a fully autonomous loop. AI builds a game, AI plays it, AI tells me whether it's fun.

The synthetic users look impressive. They play the games, log feedback, and score them on pacing, challenge, and engagement. The numbers look great.

The funny thing is, so many of the games they score high just aren't very fun.

I sit down with them and think, oh, this is too hard, or this isn't engaging. The AI playtesters are optimizing for things that look like fun on paper but don't translate to the human experience of actually wanting to keep playing. They miss the moments where a mechanic clicks. They score things highly that feel flat. They flag the most interesting parts as problems.

The fix is putting a human back in the loop. And the human feedback isn't analytical. It's intuitive:

"This feels good."

"This drags."

"I want to keep going."

That kind of judgment isn't something AI could derive from its training data. It has to come from someone who knows what fun feels like from the inside.

That experience changed how I think about AI's role in creative work. It's a phenomenal generator. It's a phenomenal iterator. But it can't tell you what's worth keeping. A human still has to do that.
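For readers who think in code, here is a minimal sketch of what "putting a human back in the loop" could look like. It is not how Game Loop is actually built. The `Playtest` record, the 0 to 1 score scale, the `shortlist_at` threshold, and the `KEEP_VERDICTS` set are all assumptions made up for illustration. The only idea it tries to capture is that synthetic scores decide what a human looks at next, and the human's gut reaction decides what stays.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Playtest:
    game: str
    synthetic_score: float               # hypothetical 0-1 average from AI playtesters
    human_verdict: Optional[str] = None  # gut reaction, e.g. "This drags."


# Hypothetical set of gut reactions that count as "keep".
KEEP_VERDICTS = {"this feels good", "i want to keep going"}


def decide(p: Playtest, shortlist_at: float = 0.7) -> str:
    """Synthetic scores only prioritize; a human verdict makes the call."""
    if p.human_verdict is not None:
        verdict = p.human_verdict.lower().rstrip(".")
        return "keep" if verdict in KEEP_VERDICTS else "discard"
    # No human has played it yet: the score only sets the queue order.
    if p.synthetic_score >= shortlist_at:
        return "needs human playtest"
    return "low priority"
```

Note that a high synthetic score never produces "keep" by itself, and a low one never produces "discard". In this sketch, `decide(Playtest("maze", 0.95, "This drags."))` returns "discard" no matter how much the synthetic users liked it.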

The Benevolent Dictator

There's a concept from the AI evaluation world I keep coming back to. The benevolent dictator. It's the human in the system who decides what good looks like. The one who turns fuzziness into clarity. The one who makes the judgment calls the AI then learns from and scales.

Another side project I've been working on for years made this concrete for me. It's called Eddee, and the idea has been to build an AI-assisted essay grading tool for teachers. Teachers spend enormous amounts of time grading. If we could get AI to do it well, we could give teachers their lives back.

The first version graded essays the way you'd expect an AI to grade essays. Technically competent. Aware of grammar and structure. And completely tone-deaf to what teachers actually care about. Teachers don't grade like a robot. They look for voice. They notice when a student is wrestling with an idea instead of just hitting the word count. They catch the moment a kid is starting to find their own way of saying things. They're flexible because they have the context of their students and their lives. The AI misses all of it. Frankly, standardized tests miss that too, but I don't have time to dive into that problem in this post.

What I've been working on with Eddee, and what I've been finding really valuable, is that instead of asking the AI to grade on its own, I've been asking the teacher to teach the AI what good looks like. The teacher becomes the benevolent dictator. They define the rubric. They show examples of what they value and why. The AI learns their standards and applies them consistently across every essay in the class.

That's a completely different relationship with AI than "the AI does the work." The teacher's judgment isn't replaced. It's amplified. Their standards, applied at scale, in a way they never could on their own.
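To make "the teacher teaches the AI what good looks like" a little more concrete, here is a rough sketch of one way it could work: the teacher's rubric and a few graded examples get packed into the prompt that accompanies every essay. This is an illustration under my own assumptions, not Eddee's real implementation. `TeacherStandard`, `Example`, and `build_grading_prompt` are hypothetical names, and the sketch stops at building the prompt text rather than calling any particular model.

```python
from dataclasses import dataclass, field


@dataclass
class Example:
    excerpt: str  # a passage the teacher graded
    grade: str    # the grade they gave it
    why: str      # the reason, in the teacher's own words


@dataclass
class TeacherStandard:
    rubric: list[str]
    examples: list[Example] = field(default_factory=list)


def build_grading_prompt(standard: TeacherStandard, essay: str) -> str:
    """Pack one teacher's rubric and examples into a grading prompt."""
    lines = [
        "Grade the essay using this teacher's standards, not generic ones.",
        "",
        "Rubric:",
    ]
    lines += [f"- {criterion}" for criterion in standard.rubric]
    lines += ["", "Examples the teacher graded:"]
    for ex in standard.examples:
        lines.append(f'- "{ex.excerpt}" -> {ex.grade} (because: {ex.why})')
    lines += ["", "Essay to grade:", essay]
    return "\n".join(lines)
```

The same `TeacherStandard` is reused for every essay in the class, which is where the consistency comes from. Change the teacher and you change the standard, without touching the grading code.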

This might not be a permanent moat. Maybe AI eventually gets good enough to figure out those values without a human teaching it. But right now, in this moment, the benevolent dictator role is real and it's irreplaceable. And it's exactly the kind of work designers and product managers are uniquely good at. We're trained to figure out what matters to humans and translate that into systems.

The Speed of Trust

Years ago, Stephen M.R. Covey wrote a book called The Speed of Trust. The core idea is simple. Trust acts like a tax or a dividend on everything you do. High trust speeds you up and lowers cost. Low trust slows you down because you have to bolt on checks and balances to make up for what's missing.

That idea matters more now than ever, because AI is best at exactly the things that erode trust the fastest. Generation at scale. Confident answers that are sometimes wrong. Deepfakes. Synthetic content that looks legitimate. We're flooding the world with stuff that looks trustworthy and isn't.

In that environment, the people who know how to build trust become more valuable, not less. Trust isn't a feature you ship. It's the result of a thousand small human decisions. The weight of a button. The pacing of an onboarding flow. The tone of an error message. The white space that says "we're not trying to trick you." Those decisions require understanding how humans feel, what makes them anxious, and what makes them confident. AI doesn't feel anxiety. It doesn't know what trust feels like from the inside.

The benevolent dictator isn't slowing AI down. The benevolent dictator is the reason AI can move fast without breaking the trust it depends on.

To My Design and Product Friends

Use the tools. Don't resist AI out of principle. Learn what it's good at and what it's bad at. Make it your execution engine.

Don't make the tools your identity. The build is getting cheaper. Your value isn't in how fast you can produce. It's in knowing what's worth producing.

Become the benevolent dictator. Get good at defining what good looks like. Get good at translating fuzzy human values into rules a system can learn from. That's the work AI can't do without you, and it's the work that compounds over time.

Remember what the job actually is. You're not making things look good. You're making people feel something. Safe. Confident. Delighted. Understood. That's a human-to-human skill. AI can copy the artifact. It can't copy the understanding.

The design profession is going to be smaller. The people still in it are going to be better at the work and better compensated for it. The bar is moving from "can you use Photoshop, Figma, etc." to "can you define what good looks like, and can people trust what you build."

That's not a death sentence for design. It might be the best thing that ever happened to it.