Self Learning Systems

Two loops

I built a thing that makes games while I sleep.

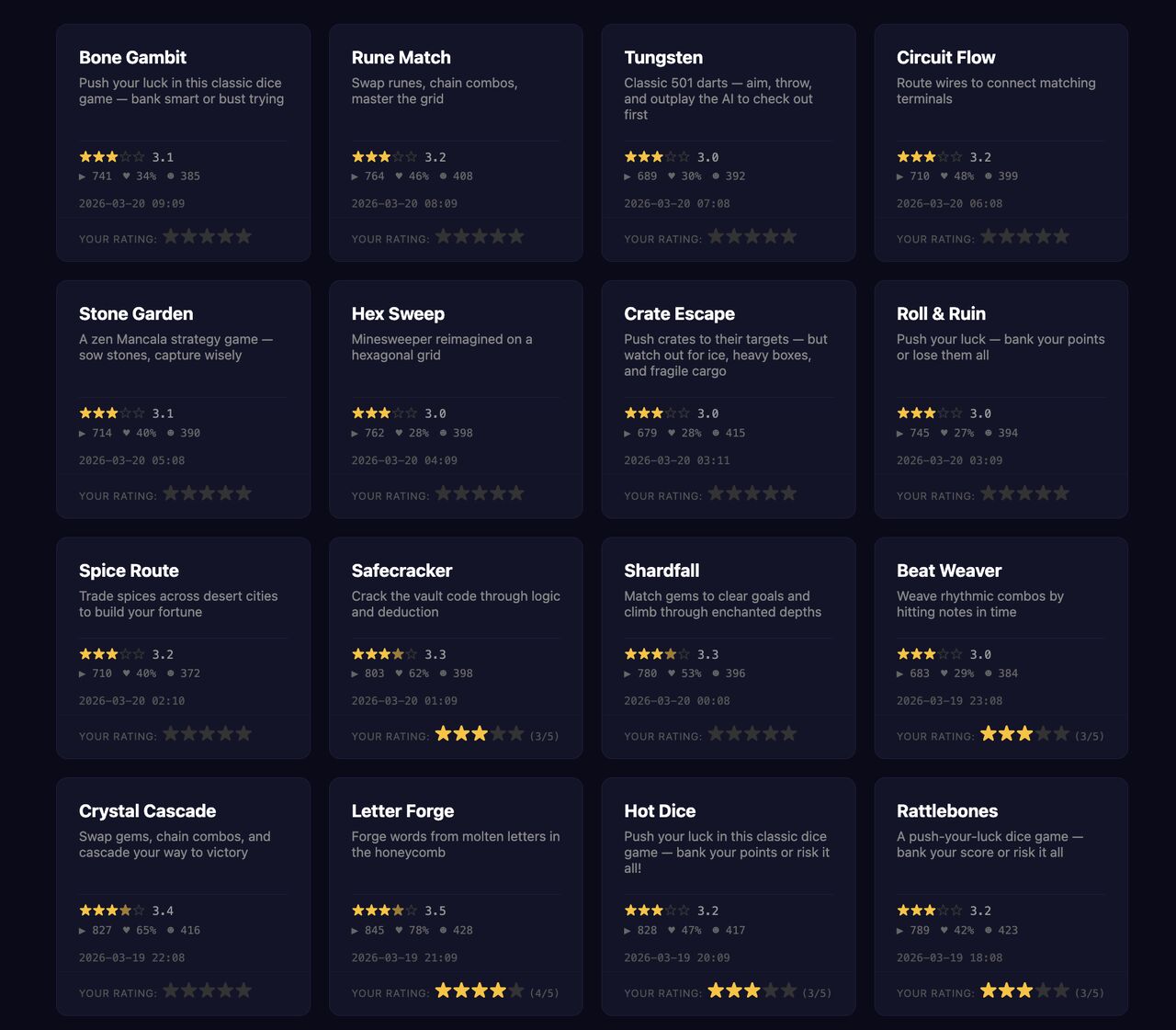

It started as a weekend experiment. I wanted to see what would happen if I set up an AI to generate mini games, had synthetic users play them and rate them, fed the results back into the system, and let it run. Every 15 minutes, it would build a new game, test it, learn from the results, and try again. I turned it on before bed and let it run for 24 hours.

By morning I had 96 games. Most of them were bad. But the ones from hour 18 onward were noticeably better than the ones from hour 1. Not because I touched anything overnight. The system figured out what worked by watching its own players react.

That experience taught me something I've been thinking about ever since.

The fast loop

What I built is a self-learning system. The pattern is simple: generate something, evaluate it, learn from the evaluation, generate the next thing slightly better. Researchers call this the Generator-Verifier-Updater loop. In game design, it's just the build-test-learn cycle that every designer knows. The difference is speed. What normally takes weeks of playtesting, I compressed into 15-minute intervals.

The synthetic users were the key. They played each game, scored it on a few dimensions, and that feedback shaped the next iteration. The system didn't need me to tell it what was fun. It could observe patterns across dozens of attempts and start converging on designs that held attention longer, had better pacing, felt more satisfying to complete.

I wrote about the three building blocks of a real agent a few weeks ago, and one of them was the heartbeat: the idea that an agent should wake up on its own and do things without being prompted. This project took that concept and pointed it inward. The heartbeat wasn't reminding me to take my meds. It was iterating on its own work.

The part that's missing

Here's where it gets interesting. The fast loop works. It produces improvement. But it has a ceiling.

The synthetic users can tell you whether a game holds attention. They can score pacing and completion rates. But they can't tell you whether a game is surprising. They can't tell you it reminds them of a mechanic from an old board game they loved, or that the color palette feels off in a way that's hard to articulate. They optimize for measurable signals, which means the system slowly converges on games that score well without being genuinely interesting.

This is the same problem showing up everywhere AI evaluates AI. Research out of NeurIPS showed that LLM judges have a self-preference bias: they rate their own outputs higher than equivalent alternatives. In expert domains, human evaluators agree with AI judges only about 60-70% of the time. The gap is exactly where taste lives.

I ran into this with content creation at work too. We built a system where AI generates content, an LLM evaluates it for brand and safety, and the output gets delivered. It works. But without a human periodically reviewing the output and feeding corrections back into both the generator and the evaluator, the system drifts toward safe and forgettable. The AI knows what's correct. It doesn't know what's good.

Two loops, not one

The architecture that actually works has two loops, not one.

The inner loop is fast and autonomous. Generate, evaluate, learn, repeat. This is the engine. It runs every 15 minutes, or every hour, or continuously. It handles volume and iteration speed. For my game project, this was the overnight loop that produced 96 games.

The outer loop is slow and human. You play the games yourself. You read the content. You look at what the system thinks is its best work and you tell it where it's wrong. Critically, your feedback goes to two places: it changes what gets generated next and it changes what the evaluator considers good. You're not just correcting output. You're calibrating the system's sense of quality.

The human doesn't need to be in every cycle. That's the whole point. The inner loop runs on its own between human check-ins. But the check-ins are what keep the system from converging on mediocrity.

Years ago I wrote about how deliberate practice made me a better Netrunner player. The core idea was the same: focused, iterative cycles with honest feedback. The difference is I was the one practicing. Now I'm building systems that practice on their own, and my job is to be the coach who steps in periodically to adjust the form.

What I'm doing with it next

The 96-game experiment was a proof of concept. What I actually want is to apply this to a single game I'm building: not generating lots of throwaway games, but using the same two-loop architecture to refine one design over hundreds of iterations.

The inner loop would test variations on mechanics, level design, difficulty curves. The synthetic users would play each variation and report what worked. The outer loop would be me, playing the best candidates, telling the system what it's missing, recalibrating what "good" means for this particular game.

Honest question: how much of the creative process can live in the fast loop, and how much requires the slow one? I don't think the answer is fixed. I think you start with heavy human involvement and gradually let the inner loop take on more as the system learns your taste. But I also suspect it never fully graduates. The drift is always there, pulling toward the average, and someone has to keep pulling it back toward the specific, the surprising, the thing that only a human would notice is missing.

The engine runs on its own. The compass needs a hand on it.